With Kafka, you can subscribe to the same data that we use for sending webhook notifications.

To get the entire dataset, Kafka is best paired with one of our other data products (such as Parquet).

Kafka is not suitable for building a database with all of the data from Farcaster day 1. Our Kafka topics currently keep data for 14 days. It’s a good solution for streaming recent data in real time (P95 data latency of <1.5s).

Why

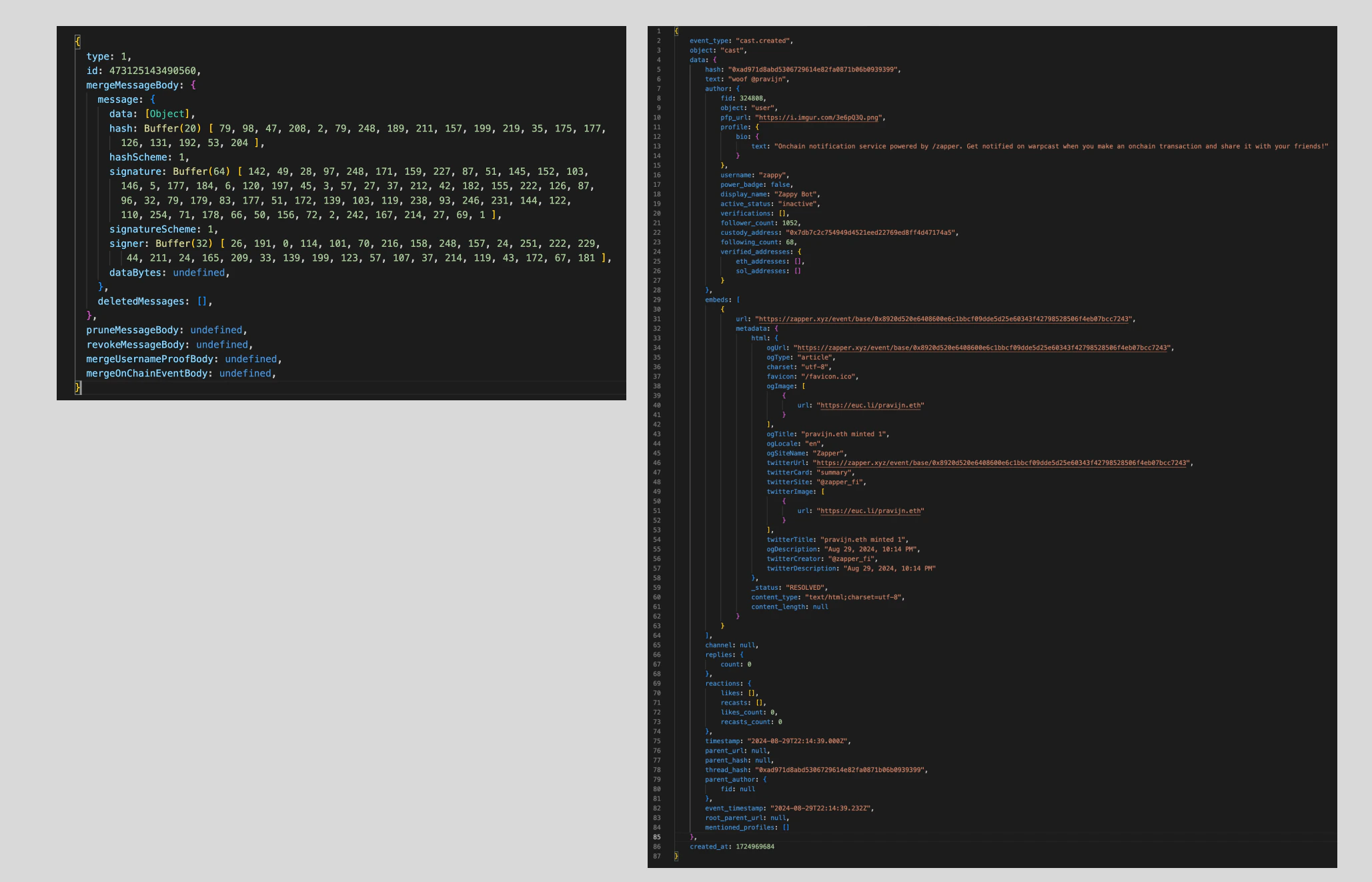

If you’re using Hub gRPC streaming, you’re getting dehydrated events that you have to piece together yourself before they are useful (see here for example). With Neynar’s Kafka stream, you get a fully hydrated event (e.g., user.created) that you can use in your app/product immediately. See the comparison between the gRPC hub event and the Kafka event below.

How

- Reach out, and we will create credentials for you and send them via 1Password.

- For authentication, the connection requires SASL/SCRAM SHA512.

- The connection requires TLS (sometimes called SSL for legacy reasons) for encryption.

- farcaster-mainnet-events is the aggregated topic that contains all events.

- farcaster-mainnet-user-events contains user.created, user.updated and user.transferred

- farcaster-mainnet-cast-events contains cast.created and cast.deleted

- farcaster-mainnet-follow-events contains follow.created and follow.deleted

- farcaster-mainnet-reaction-events contains reaction.created and reaction.deleted

- farcaster-mainnet-signer-events contains signer.created and signer.deleted

Use the farcaster-mainnet-events topic to get all events in one topic.
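If you only need some event types, you may prefer the per-entity topics over the aggregated one. The following is a minimal sketch of how the topic list above could be turned into a lookup. The mapping is copied from the list; the helper name `topics_for` is hypothetical and not part of any Neynar library.

```python
# Per-entity topics and the event types they carry, copied from the topic list.
TOPIC_EVENTS = {
    "farcaster-mainnet-user-events": {"user.created", "user.updated", "user.transferred"},
    "farcaster-mainnet-cast-events": {"cast.created", "cast.deleted"},
    "farcaster-mainnet-follow-events": {"follow.created", "follow.deleted"},
    "farcaster-mainnet-reaction-events": {"reaction.created", "reaction.deleted"},
    "farcaster-mainnet-signer-events": {"signer.created", "signer.deleted"},
}

# The aggregated topic carries every event type.
AGGREGATED_TOPIC = "farcaster-mainnet-events"


def topics_for(event_types):
    """Return the sorted per-entity topics needed for the given event types.

    Hypothetical helper for illustration only.
    """
    wanted = set(event_types)
    known = set().union(*TOPIC_EVENTS.values())
    unknown = wanted - known
    if unknown:
        raise ValueError(f"unknown event types: {sorted(unknown)}")
    return sorted(topic for topic, events in TOPIC_EVENTS.items() if events & wanted)
```

For example, `topics_for(["cast.created", "follow.deleted"])` selects the cast and follow topics. If you need most event types, subscribing to `AGGREGATED_TOPIC` alone is likely simpler.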

There are three brokers available over the Internet. Provide them all to your client:

- b-1-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196

- b-2-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196

- b-3-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196
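As a rough sketch, the connection requirements above (SASL/SCRAM SHA512, TLS, and all three brokers) could be collected into a client configuration like the one below. It assumes a librdkafka-based client such as the `confluent-kafka` Python package, whose configuration uses these property names. The function name `build_consumer_config`, the consumer group ID, and the `auto.offset.reset` value are assumptions for illustration, not Neynar requirements.

```python
# The three public brokers listed above.
BROKERS = [
    "b-1-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196",
    "b-2-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196",
    "b-3-public.tfmskneynar.5vlahy.c11.kafka.us-east-1.amazonaws.com:9196",
]


def build_consumer_config(username, password, group_id):
    """Build a librdkafka-style consumer config (hypothetical helper)."""
    return {
        # Provide all brokers to the client, comma-separated.
        "bootstrap.servers": ",".join(BROKERS),
        # SASL authentication over a TLS-encrypted connection.
        "security.protocol": "SASL_SSL",
        "sasl.mechanisms": "SCRAM-SHA-512",
        # Credentials as issued to you.
        "sasl.username": username,
        "sasl.password": password,
        # Consumer group ID: any name you choose (assumption).
        "group.id": group_id,
        # Assumption: start from the oldest retained message when the
        # group has no committed offset; use "latest" for new events only.
        "auto.offset.reset": "earliest",
    }
```

With `confluent-kafka` installed, the resulting dictionary can be passed to `confluent_kafka.Consumer(...)`, followed by a call to `subscribe(["farcaster-mainnet-events"])` on the consumer. Other clients use different option names for the same settings.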

You can use kcat (formerly kafkacat) to test things locally: